A team of engineering students from the University of Antwerp is building a humanoid robot that will be able to translate speech into sign language. Sponsored by the European Institute for Otorhinolaryngology, the robot, named Project Aslan, aims to help address the worldwide shortage of sign language interpreters.

The project combines 3D printing with readily available components to make the robot affordable and easy to manufacture. Through Protolabs Network, a network of 3D printing services, the Aslan robot can be produced in over 140 countries.

The project started in 2014, when three master's students (Guy Fierens, Stijn Huys and Jasper Slaets) saw a large communication gap between the hearing and Deaf communities. They felt modern technology could help bridge that gap, especially in situations where support for the Deaf community is still lacking. Stijn Huys outlines the beginning of the project:

I was talking to friends about the shortage of sign language interpreters in Belgium, especially in Flanders for the Flemish sign language. We wanted to do something about it. I also wanted to work on robotics for my master's, so we combined the two.

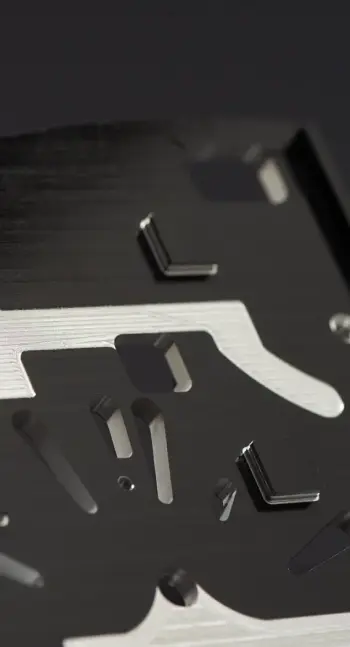

With the help of further students, a robotics teacher and an ENT surgeon, work progressed. Now, three years after it began as a simple idea, Project Aslan is in its first iteration: a 3D printed robotic arm that can convert text into sign language, including finger spelling and counting. ASLAN is an abbreviation of “Antwerp’s Sign Language Actuating Node”, which reflects its role as a connection between the hearing and the hearing-impaired.

To manufacture the Aslan robot, the team needed an affordable and scalable solution that was accessible all over the world. Protolabs Network currently connects over 1 billion people to a 3D printer within 10 miles, so it made sense to partner and make use of this technology and network. 3D printing was also chosen because parts that break after extensive use are easy to replace, and updated parts can be printed as soon as they become available.

The first prototype featured 25 3D printed parts, which took a total of 139 hours to print. In addition to these 25 parts, 16 servo motors, 3 motor controllers, an Arduino Due and a number of other components were needed to fully assemble the robot. Assembling the complete arm takes around 10 hours. The Aslan robot works by receiving information from a local network and checking for updated sign languages from all over the world. Users connected to the network can send messages, which then drive the hand, elbow and finger joints to sign the messages.
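To make the message flow above more concrete, here is a minimal sketch of how a text message might be turned into a sequence of servo poses for finger spelling. It is not the team's software: the pose table, the angle values, the `Frame` type, the function names and the line format in `encode_frame` are all assumptions made for illustration. Only the count of 16 servo motors comes from the article.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

NUM_SERVOS = 16  # the article states the first prototype uses 16 servo motors

Pose = Tuple[int, ...]

# Hypothetical neutral pose: every servo at its mid position (degrees).
REST_POSE: Pose = (90,) * NUM_SERVOS

# Hypothetical pose table. The angles are placeholders, NOT real hand shapes
# from any sign language; a real table would hold one entry per sign.
POSES: Dict[str, Pose] = {
    "a": (10,) * 5 + (90,) * 11,
    "b": (170,) * 5 + (90,) * 11,
}


@dataclass(frozen=True)
class Frame:
    symbol: str    # character this frame spells ("" for the final rest frame)
    angles: Pose   # one target angle per servo, in degrees
    hold_ms: int   # how long to hold the pose


def message_to_frames(message: str,
                      poses: Dict[str, Pose] = POSES,
                      hold_ms: int = 600) -> List[Frame]:
    """Convert a text message into a list of finger-spelling frames.

    Spaces become a rest pose, unknown characters are skipped, and the
    sequence always ends with the arm back at rest.
    """
    frames: List[Frame] = []
    for ch in message.lower():
        if ch.isspace():
            frames.append(Frame(" ", REST_POSE, hold_ms))
        elif ch in poses:
            frames.append(Frame(ch, poses[ch], hold_ms))
    frames.append(Frame("", REST_POSE, hold_ms))
    return frames


def encode_frame(frame: Frame) -> str:
    """Serialise a frame as one text line (assumed format: angles;hold_ms)."""
    return ",".join(str(a) for a in frame.angles) + f";{frame.hold_ms}\n"


if __name__ == "__main__":
    for frame in message_to_frames("ab a"):
        print(repr(frame.symbol), encode_frame(frame), end="")
```

In a setup like this, each encoded line could be sent over the local network or a serial link to the microcontroller, which would then move the servos to the listed angles. Keeping the pose table as plain data is one way updated signs could be distributed without changing the code.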

The robot was printed in PLA filament on a desktop 3D printer, because PLA is the most common desktop printing material and has the right material properties for the team's application. Desktop 3D printing was also chosen because it's the most accessible and affordable manufacturing technology worldwide.

The goal of Project Aslan is not to replace human sign language interpreters but merely to provide support when they are not available. Once the designs are optimized, the robot can be used in numerous practical applications that, along with providing general assistance, can start to address the root of the problem: sign language courses are scarce, which leads to a shortage of interpreters. The Aslan robot can be used alongside a human teacher to help teach sign language, thereby expanding the capacity of these classes.

Erwin Smet, robotics teacher, outlines the possible applications of Project Aslan:

A deaf person who needs to appear in court, a deaf person following a lesson in a classroom somewhere. These are all circumstances where a deaf person needs a sign language interpreter, but where often such an interpreter is not readily available. This is where a low-cost option, like Aslan, can offer a solution.

The future of the project is set, with four new research topics to be picked up by incoming master's students. Two of them focus on optimising the current design to create a two-arm setup. The third topic covers facial expressions and the addition of an expressive face to the design.

The final topic is to investigate whether a webcam can be used to teach the robot new gestures. Sign language is performed using the entire body, including both arms, the shoulders and the face. Using a webcam to detect the movements of the larger joints and to detect facial expressions will aid in gesture development.

Once the mechanical design and software of the robot have reached a sufficiently advanced level, all designs will be made open source for everyone to use.

Check out our other Student Grant projects:

- A 3D printed keyboard for the blind

- A functional prototype of the world’s fastest underwater jetpack

- A modular panoramic 3D printed camera

Or start applying now to the Protolabs Network Student Innovation Grant 2020. The deadline for submissions is on June 28, 2020, 23:59 CET.